Node.js Geocoding API Tutorial: Concurrency, Retries, and CSV Streaming

Production-ready Node.js geocoding tutorial: bounded concurrency with p-limit, retry/backoff on 429s, and a streaming CSV pipeline. All code tested.

This is a working Node.js tutorial for geocoding addresses through a REST API. The code in this post was written, copy-pasted into files, and executed before publishing. Every snippet runs on a clean Node 22 install with three dependencies (p-limit, csv-parse, csv-stringify). No frameworks, no SDK, no magic.

By the end of the post you will have a script that reads a CSV of addresses, geocodes them concurrently under a configurable concurrency cap, retries on 429s with exponential backoff, and writes a CSV with lat, lng, and error columns without loading the input file into memory. About 80 lines of code.

The endpoint used throughout is csv2geo.com/api/v1. The free tier is 3,000 requests per day, no credit card. Sign in and grab a key from /api-keys if you want to follow along; the demo endpoints used in the early examples need no key.

The endpoint

Two endpoints cover 95% of real-world geocoding work. Forward turns an address string into coordinates. Reverse turns coordinates into an address. Both accept GET (single) or POST (batch).

# Forward (single)

GET https://csv2geo.com/api/v1/geocode?q=ADDRESS&country=US

# Reverse (single)

GET https://csv2geo.com/api/v1/reverse?lat=LAT&lng=LNG

# Batch forward

POST https://csv2geo.com/api/v1/geocode

Body: { "addresses": ["addr1", "addr2", ...] }

# Auth: either ?api_key=KEY query string, or

# Authorization: Bearer KEY header

The response shape for a single forward request looks like this. This is real output, not a documentation example.

{

"query": "1600 Pennsylvania Avenue NW Washington DC",

"results": [

{

"formatted_address": "1600 Pennsylvania Ave NW, Washington, DC 20500-0005, United States",

"location": { "lat": 38.89768, "lng": -77.03655 },

"accuracy": "houseNumber",

"accuracy_score": 1,

"components": {

"house_number": "1600",

"street": "Pennsylvania Ave NW",

"city": "Washington",

"state": "District of Columbia",

"postal_code": "20500-0005",

"country": "USA"

}

}

],

"meta": { "response_time_ms": 673, "source": "here" }

}

Two fields matter most when you are writing client code. results[0].location is your {lat, lng}. results[0].accuracy is the match level: "houseNumber" is rooftop, "street" is street centroid, "place" is a POI match, "postcode" is a postcode centroid. Use it to drop low-confidence results before they pollute your dataset.
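One way to act on the accuracy field is to rank the match levels and keep only results at or above a chosen level. The sketch below assumes the four values named above are the complete set; the helper name meetsAccuracy and the ordering of "place" relative to "street" are my own assumptions, not something the API specifies.

```javascript
// Assumed precision order, most precise first. The API names the values but
// does not rank them, so adjust this list to suit your data.
const ACCURACY_RANK = ['houseNumber', 'street', 'place', 'postcode'];

// Returns true when result.accuracy is at least as precise as minLevel.
// Unrecognised accuracy values are rejected rather than guessed at.
function meetsAccuracy(result, minLevel = 'street') {
  const need = ACCURACY_RANK.indexOf(minLevel);
  if (need === -1) throw new Error(`Unknown minLevel: ${minLevel}`);
  const have = ACCURACY_RANK.indexOf(result?.accuracy);
  return have !== -1 && have <= need;
}

// Illustrative input: only the accuracy field matters here.
const sampleResults = [{ accuracy: 'houseNumber' }, { accuracy: 'postcode' }];
const kept = sampleResults.filter(r => meetsAccuracy(r, 'street'));
console.log(kept.length); // 1
```

Rejecting unknown values keeps a future accuracy level from silently passing the filter.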

First request

Node 18+ has fetch built in. No npm install required for the first call.

// geocode.mjs

const API_KEY = process.env.CSV2GEO_KEY;

async function geocode(address, country = 'US') {

const url = new URL('https://csv2geo.com/api/v1/geocode');

url.searchParams.set('q', address);

url.searchParams.set('country', country);

const res = await fetch(url, {

headers: { Authorization: `Bearer ${API_KEY}` },

});

if (!res.ok) throw new Error(`HTTP ${res.status}: ${await res.text()}`);

const data = await res.json();

return data.results[0]?.location ?? null;

}

console.log(await geocode('1 Apple Park Way, Cupertino, CA'));

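// -> { lat: 37.33177, lng: -122.03042 }

Run it: CSV2GEO_KEY=geo_live_xxx node geocode.mjs. The Authorization header form is the recommended one: the key stays out of the request URL, so it does not end up in access logs or proxy logs. Typing the key inline as shown does leave it in your shell history, so for anything beyond a quick test, load it from a file instead (for example node --env-file=.env geocode.mjs on Node 20.6 or later).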

Reverse geocoding

Same shape, different parameters. Pass lat and lng, get back an address.

async function reverse(lat, lng) {

const url = new URL('https://csv2geo.com/api/v1/reverse');

url.searchParams.set('lat', String(lat));

url.searchParams.set('lng', String(lng));

const res = await fetch(url, {

headers: { Authorization: `Bearer ${API_KEY}` },

});

if (!res.ok) throw new Error(`HTTP ${res.status}`);

const data = await res.json();

return data.results[0] ?? null;

}

const r = await reverse(48.8584, 2.2945); // Eiffel Tower

console.log(r?.formatted_address, r?.distance_meters); // r is null when nothing matched

Reverse responses include a distance_meters field: how far the matched address sits from the input coordinate. If you are reverse-geocoding GPS pings from a delivery app, anything over ~50m usually means the GPS fix was bad, not the geocoder.
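The ~50m rule of thumb can be turned into a small check applied to each reverse result. This is a sketch only: classifyReverseMatch and its labels are hypothetical names, and the default threshold is simply the figure from the paragraph above.

```javascript
// Hypothetical helper: label a reverse-geocoding result by how far the matched
// address is from the input coordinate. maxMeters defaults to the ~50 m rule
// of thumb; tune it for your own GPS source.
function classifyReverseMatch(result, maxMeters = 50) {
  if (!result) return 'no_match';
  const d = result.distance_meters;
  if (typeof d !== 'number' || Number.isNaN(d)) return 'unknown_distance';
  return d <= maxMeters ? 'ok' : 'suspect_fix';
}

console.log(classifyReverseMatch({ distance_meters: 12 }));  // ok
console.log(classifyReverseMatch({ distance_meters: 180 })); // suspect_fix
console.log(classifyReverseMatch(null));                     // no_match
```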

Doing it in parallel without melting anything

The wrong way to geocode 10,000 addresses in Node is Promise.all over a map of fetches. That starts 10,000 requests at once, trips the rate limit almost immediately, and, because Promise.all rejects as soon as one request fails, throws away the results that did succeed. Don't.

The right way is bounded concurrency. The cleanest tool for the job is p-limit: a small single-purpose module whose only dependency is the equally small yocto-queue.
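To make "bounded concurrency" concrete before reaching for the package, here is a dependency-free sketch of the idea: a fixed number of workers pull items from a shared cursor. mapWithConcurrency and fakeGeocode are illustrative names of my own, and this is not how p-limit is implemented internally.

```javascript
// Runs fn over items with at most `concurrency` calls in flight.
// Results come back in input order; a failure is recorded, not thrown.
async function mapWithConcurrency(items, concurrency, fn) {
  const results = new Array(items.length);
  let next = 0; // index of the next item to hand out

  async function worker() {
    while (next < items.length) {
      const i = next++; // no race: this runs synchronously between awaits
      try {
        results[i] = { ok: true, value: await fn(items[i], i) };
      } catch (err) {
        results[i] = { ok: false, error: String(err?.message ?? err) };
      }
    }
  }

  const workerCount = Math.max(1, Math.min(concurrency, items.length));
  await Promise.all(Array.from({ length: workerCount }, () => worker()));
  return results;
}

// Demo with a fake task so nothing touches the network.
let inFlight = 0;
let peak = 0;
const fakeGeocode = async address => {
  inFlight++;
  peak = Math.max(peak, inFlight);
  await new Promise(r => setTimeout(r, 5));
  inFlight--;
  return address.toUpperCase();
};

mapWithConcurrency(['a', 'b', 'c', 'd', 'e'], 2, fakeGeocode).then(out => {
  console.log(out.map(r => r.value).join(','), 'peak in flight:', peak);
});
```

The p-limit version below expresses the same cap with less code and lets you share one limiter across unrelated call sites.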

npm install p-limit

import pLimit from 'p-limit';

const limit = pLimit(8); // tune to your plan's per-minute rate limit

async function geocode(address) {

const url = new URL('https://csv2geo.com/api/v1/geocode');

url.searchParams.set('q', address);

const res = await fetch(url, {

headers: { Authorization: `Bearer ${process.env.CSV2GEO_KEY}` },

});

if (!res.ok) throw new Error(`HTTP ${res.status}`);

const data = await res.json();

return data.results[0]?.location ?? null;

}

const addresses = [/* ...10,000 strings... */];

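// allSettled records each failure separately; Promise.all would reject the whole run on the first error.

const results = await Promise.allSettled(

addresses.map(a => limit(() => geocode(a)))

);

// results[i] is { status: 'fulfilled', value } or { status: 'rejected', reason }

Concurrency tuning is empirical. p-limit caps requests in flight, not requests per minute: the rate you actually send is roughly the concurrency divided by your average response time, so treat the numbers below as starting points and check them against the latency you observe. The free plan caps at 100 requests per minute, so start at a concurrency of 4 or lower. The Starter plan is 1,000/min; 16 to 24 is a good range. The Pro plan is 10,000/min; start at 64 and watch the X-RateLimit-Remaining header.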

Retry, backoff, and rate-limit headers

A production geocoder needs three things: (1) treat 429 and 5xx as retryable, (2) honour the Retry-After header when present, (3) cap the number of attempts so a persistent failure does not loop forever.

async function geocodeWithRetry(address, { maxAttempts = 4 } = {}) {

for (let attempt = 1; attempt <= maxAttempts; attempt++) {

const url = new URL('https://csv2geo.com/api/v1/geocode');

url.searchParams.set('q', address);

const res = await fetch(url, {

headers: { Authorization: `Bearer ${process.env.CSV2GEO_KEY}` },

});

if (res.ok) return res.json();

// 4xx other than 429 -> non-retriable (bad key, bad input, etc.)

if (res.status !== 429 && res.status < 500) {

throw new Error(`HTTP ${res.status}: ${await res.text()}`);

}

// 429 / 5xx -> back off and try again, unless this was the last attempt

if (attempt === maxAttempts) break;

// Retry-After in seconds if the server sent one, else exponential backoff (2s, 4s, 8s)

const retryAfter = Number(res.headers.get('retry-after')) || 2 ** attempt;

await sleep(retryAfter * 1000);

}

throw new Error(`Gave up after ${maxAttempts} attempts`);

}

const sleep = ms => new Promise(r => setTimeout(r, ms));

The response also exposes three headers worth checking on every successful call:

- X-RateLimit-Limit — your plan's per-minute ceiling.

- X-RateLimit-Remaining — what you have left in this window.

- X-RateLimit-Reset — Unix seconds until the window resets.

If Remaining drops below 10% of Limit, slow down voluntarily. Cheaper than thrashing on 429s and far cheaper than a sudden burst that takes the whole pipeline offline.
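The header handling described in this section can be isolated into two small helpers. This is a sketch under assumptions: the helper names are mine, the 10% threshold is the one suggested above, and the HTTP-date branch reflects the fact that the HTTP specification allows Retry-After to be either a number of seconds or a date (whether this API ever sends the date form is not something the post states).

```javascript
// Reads a header as a number; NaN when it is missing, empty, or not numeric.
function headerNumber(headers, name) {
  const raw = headers.get(name);
  if (raw === null || raw.trim() === '') return NaN;
  return Number(raw);
}

// Milliseconds to wait before the next attempt. Honours Retry-After in either
// of its two standard forms, otherwise falls back to 2^attempt seconds.
function retryDelayMs(headers, attempt, now = Date.now()) {
  const raw = headers.get('retry-after');
  if (raw !== null && raw.trim() !== '') {
    const seconds = Number(raw);
    if (Number.isFinite(seconds) && seconds >= 0) return seconds * 1000;
    const when = Date.parse(raw); // HTTP-date form
    if (!Number.isNaN(when)) return Math.max(0, when - now);
  }
  return 2 ** attempt * 1000;
}

// True when less than `fraction` of the window's quota is left. Returns false
// when either header is missing, so the caller keeps its normal pace.
function shouldSlowDown(headers, fraction = 0.1) {
  const limit = headerNumber(headers, 'x-ratelimit-limit');
  const remaining = headerNumber(headers, 'x-ratelimit-remaining');
  if (!Number.isFinite(limit) || !Number.isFinite(remaining) || limit <= 0) return false;
  return remaining < limit * fraction;
}

// Illustrative header values, not captured from the API.
const demoHeaders = new Headers({
  'X-RateLimit-Limit': '1000',
  'X-RateLimit-Remaining': '42',
  'Retry-After': '7',
});
console.log(retryDelayMs(demoHeaders, 1)); // 7000
console.log(shouldSlowDown(demoHeaders));  // true
```

Both functions take the Headers object from a fetch response, so they slot in wherever res.headers is already available.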

The batch endpoint

Subscription tiers from Starter upward expose a batch endpoint that takes an array of addresses in a single POST. Up to 10,000 per request on Pro. One round-trip instead of N. Free tier does not allow batch — you have to loop singles.

async function batchGeocode(addresses) {

const res = await fetch('https://csv2geo.com/api/v1/geocode', {

method: 'POST',

headers: {

'Content-Type': 'application/json',

Authorization: `Bearer ${process.env.CSV2GEO_KEY}`,

},

body: JSON.stringify({ addresses }),

});

if (!res.ok) throw new Error(`HTTP ${res.status}: ${await res.text()}`);

return res.json();

}

const out = await batchGeocode([

'1600 Pennsylvania Ave NW, Washington, DC',

'1 Apple Park Way, Cupertino, CA',

'233 S Wacker Dr, Chicago, IL',

]);

// out.results is an array aligned 1:1 with the input order

Batch responses preserve input order. Position N of the response always corresponds to position N of the input, so there is no need to round-trip an id field, though you can.
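When the input is larger than the per-request cap, the list has to be cut into slices and the positional alignment checked per slice. The sketch below assumes a batch function shaped like batchGeocode above (it resolves to an object with a results array); chunk and geocodeInBatches are hypothetical helper names, and the default of 10,000 is the Pro figure quoted earlier.

```javascript
// Split a list into consecutive slices of at most `size` items.
function chunk(items, size) {
  if (!Number.isInteger(size) || size < 1) {
    throw new RangeError('size must be a positive integer');
  }
  const out = [];
  for (let i = 0; i < items.length; i += size) out.push(items.slice(i, i + size));
  return out;
}

// Sends each slice through batchFn one after another and pairs every input
// address with the result at the same position in the response.
async function geocodeInBatches(addresses, batchFn, maxPerRequest = 10000) {
  const paired = [];
  for (const slice of chunk(addresses, maxPerRequest)) {
    const { results } = await batchFn(slice);
    if (!Array.isArray(results) || results.length !== slice.length) {
      throw new Error('Batch response is not aligned with its input');
    }
    slice.forEach((address, i) => paired.push({ address, result: results[i] }));
  }
  return paired;
}

console.log(chunk(['a', 'b', 'c', 'd', 'e'], 2)); // [ [ 'a', 'b' ], [ 'c', 'd' ], [ 'e' ] ]
```

Checking the lengths up front turns a misaligned response into a loud error instead of coordinates silently attached to the wrong address.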

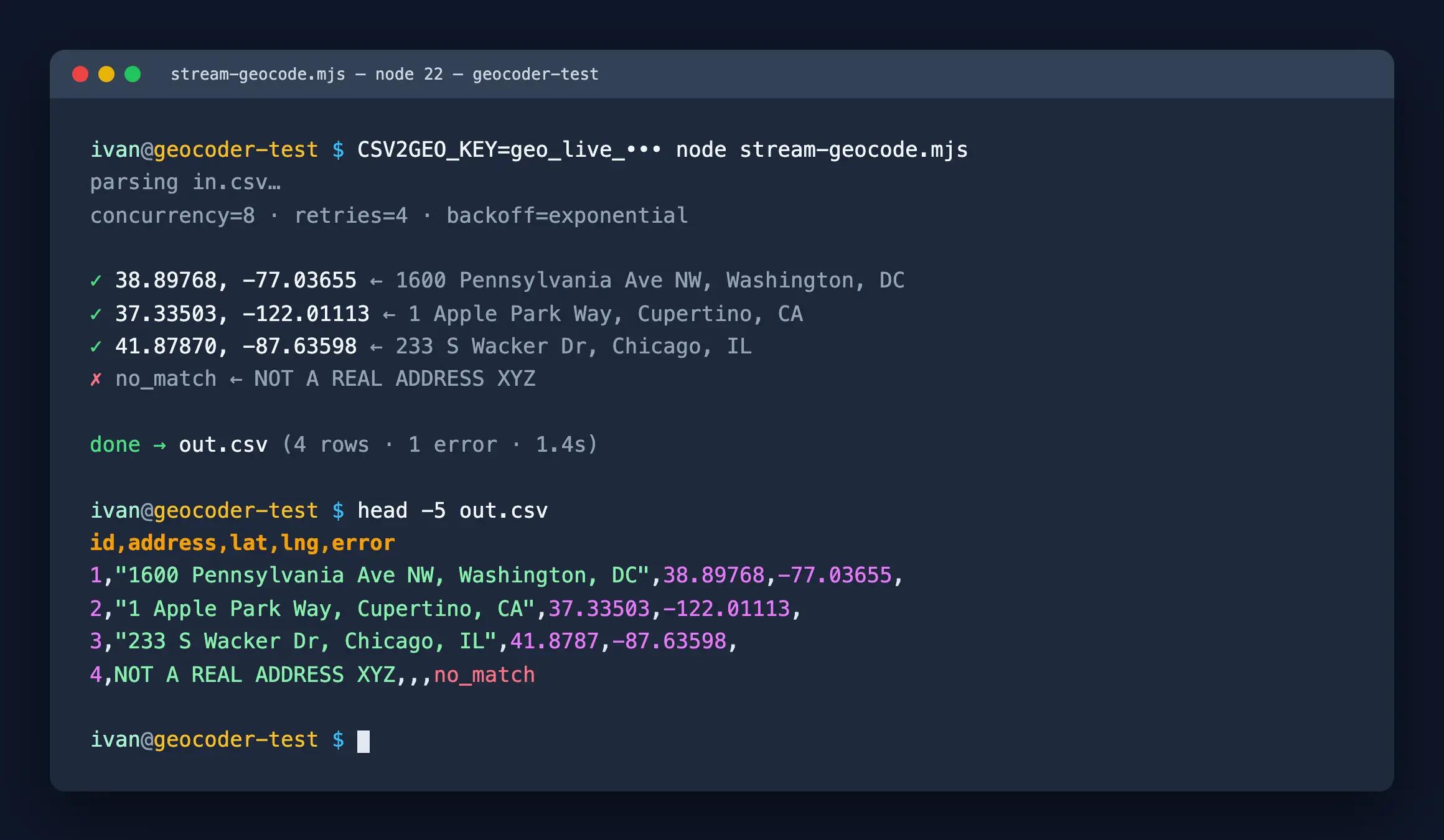

Streaming a CSV without OOM

If your input file is 100,000 rows, you do not want to fs.readFileSync it, parse it into a giant array, and then map() over the result. You will run out of memory or burn through your rate limit. Stream it.

npm install p-limit csv-parse csv-stringify

// stream-geocode.mjs

import { createReadStream, createWriteStream } from 'node:fs';

import { parse } from 'csv-parse';

import { stringify } from 'csv-stringify';

import pLimit from 'p-limit';

const KEY = process.env.CSV2GEO_KEY;

const limit = pLimit(8);

async function geocode(q) {

const url = new URL('https://csv2geo.com/api/v1/geocode');

url.searchParams.set('q', q);

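let res;

try {

res = await fetch(url, { headers: { Authorization: `Bearer ${KEY}` } });

} catch (err) {

// Network failure (DNS, reset, timeout): record it instead of crashing the run

return { lat: '', lng: '', error: 'network_error' };

}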

if (!res.ok) return { lat: '', lng: '', error: `http_${res.status}` };

const data = await res.json();

const r = data.results?.[0];

if (!r) return { lat: '', lng: '', error: 'no_match' };

if (r.accuracy_score < 0.7) return { lat: '', lng: '', error: 'low_confidence' };

return { lat: r.location.lat, lng: r.location.lng, error: '' };

}

const out = createWriteStream('out.csv');

const stringer = stringify({ header: true, columns: ['id', 'address', 'lat', 'lng', 'error'] });

stringer.pipe(out);

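// Track unfinished tasks so reading pauses when too many rows are waiting.

const pending = new Set();

const parser = createReadStream('in.csv').pipe(parse({ columns: true }));

for await (const row of parser) {

const task = limit(async () => {

const g = await geocode(row.address);

stringer.write({ ...row, ...g });

}).finally(() => pending.delete(task));

pending.add(task);

// Without this pause every input row would be queued in memory at once (100 is an arbitrary cap).

if (pending.size >= 100) await Promise.race(pending);

}

await Promise.all(pending);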

stringer.end();

await new Promise(r => out.on('finish', r));

console.log('done -> out.csv');

Three things this script does that beginners' code usually does not:

- Reads the input as a stream (no full-file load) and writes the output as a stream (no buffer-everything-then-flush).

- Caps in-flight geocoding work at 8 concurrent requests via p-limit.

- Records errors in a column rather than crashing on the first failure. That is the difference between a script that finishes and a script that has to be restarted with a "where did it stop" question.

A minimal TypeScript wrapper

If your project is TypeScript, type the response shape once and reuse it everywhere. The types below are narrow enough to be useful and loose enough that future API additions do not break the build.

// types.ts

export type Accuracy = 'houseNumber' | 'street' | 'place' | 'postcode';

export interface GeocodeResult {

formatted_address: string;

location: { lat: number; lng: number };

accuracy: Accuracy;

accuracy_score: number;

components: {

house_number?: string;

street?: string;

city?: string;

state?: string;

postal_code?: string;

country?: string;

};

}

export interface GeocodeResponse {

query: string;

results: GeocodeResult[];

meta: { response_time_ms: number; source: string };

}

// client.ts

import type { GeocodeResponse, GeocodeResult } from './types';

export async function geocode(

q: string,

country = 'US',

): Promise<GeocodeResult | null> {

const url = new URL('https://csv2geo.com/api/v1/geocode');

url.searchParams.set('q', q);

url.searchParams.set('country', country);

const res = await fetch(url, {

headers: { Authorization: `Bearer ${process.env.CSV2GEO_KEY}` },

});

if (!res.ok) throw new Error(`HTTP ${res.status}`);

const data = (await res.json()) as GeocodeResponse;

return data.results[0] ?? null;

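}

Two design choices worth pointing out. First, the function returns null on no-match instead of throwing: empty results is not an error, just an answer. Second, accuracy is typed as a union, so when you add a new value to the Accuracy type, every switch that ends in an exhaustiveness check (a default branch that assigns the value to never) stops compiling until you handle the new case. The compiler only sees your types, not what the API sends at runtime, so a value the API adds later will not fail the build by itself. That is the kind of typed code that keeps surviving API minor versions.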
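Because types are erased before the code runs, a runtime guard is a useful companion to the union type. The following is a plain JavaScript sketch, so it works in either a JS or a TS project; normalizeAccuracy and describeMatch are hypothetical names, and the descriptions are the ones given for each accuracy level earlier in this post.

```javascript
const KNOWN_ACCURACY = new Set(['houseNumber', 'street', 'place', 'postcode']);

// Returns the accuracy string if it is one this client knows about,
// otherwise 'unknown' so callers can branch on it explicitly.
function normalizeAccuracy(value) {
  return typeof value === 'string' && KNOWN_ACCURACY.has(value) ? value : 'unknown';
}

// Maps a result to a human-readable match description.
function describeMatch(result) {
  switch (normalizeAccuracy(result?.accuracy)) {
    case 'houseNumber': return 'rooftop';
    case 'street': return 'street centroid';
    case 'place': return 'point of interest';
    case 'postcode': return 'postcode centroid';
    default: return 'unrecognised accuracy, treat as low confidence';
  }
}

console.log(describeMatch({ accuracy: 'street' }));    // street centroid
console.log(describeMatch({ accuracy: 'something' })); // unrecognised accuracy, treat as low confidence
```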

Things that bite people

Order is not preserved when you parallelise singles. Promise.all returns in input order, but the network finishes whichever request comes back first. If you stream-write results in network-completion order, your output rows will be shuffled. Either stream-write keyed by id (the example above does this — each row carries its original id), or buffer until the end.
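A middle ground between the two options is a reorder buffer: hold back only the results that arrived early and release them as soon as the gap before them fills. The sketch below is one possible shape for that, with createReorderBuffer as a made-up name; it assumes each task knows the zero-based position of its input row.

```javascript
// Accepts (index, value) pairs in any order and calls emit(value) in index
// order. Only out-of-order values are held in memory.
function createReorderBuffer(emit) {
  const waiting = new Map();
  let nextIndex = 0; // the next position allowed to be emitted
  return function push(index, value) {
    waiting.set(index, value);
    while (waiting.has(nextIndex)) {
      emit(waiting.get(nextIndex));
      waiting.delete(nextIndex);
      nextIndex++;
    }
  };
}

// Results arrive as 2, 0, 1 but are emitted as a, b, c.
const seen = [];
const push = createReorderBuffer(v => seen.push(v));
push(2, 'c');
push(0, 'a');
push(1, 'b');
console.log(seen.join('')); // abc
```

The trade-off is that one slow request near the start of the file makes everything behind it wait in the buffer, so this suits runs where latency is fairly uniform.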

fetch does not throw on non-2xx. A 404 or 429 is a resolved promise, not a rejection. Always check res.ok before parsing the body. The number of "why are my results undefined" tickets that trace back to this is large.
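A thin wrapper makes the res.ok check impossible to forget. This is a minimal sketch, not part of any SDK: fetchJson is a hypothetical helper, and the fetchImpl parameter exists only so the function can be exercised without a network.

```javascript
// Wraps fetch so that non-2xx responses become rejections carrying the status.
async function fetchJson(url, init = {}, fetchImpl = fetch) {
  const res = await fetchImpl(url, init);
  if (!res.ok) {
    // Keep a short excerpt of the body for debugging; ignore read failures.
    const body = await res.text().catch(() => '');
    const err = new Error(`HTTP ${res.status}: ${body.slice(0, 200)}`);
    err.status = res.status; // lets callers tell a 429 from a 404
    throw err;
  }
  return res.json();
}

// Exercise it with a stubbed response instead of a real request.
const stub429 = async () => ({ ok: false, status: 429, text: async () => 'slow down' });
fetchJson('https://example.invalid/', {}, stub429).catch(err => {
  console.log(err.status, err.message); // 429 HTTP 429: slow down
});
```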

Country codes matter. The country parameter is a hint, not a filter. If you pass country=US for an address in Toronto, you will get back a wrong but plausible result somewhere in upstate New York. Set country per row when your input is mixed.

Empty strings are valid input that returns garbage. Validate row.address before sending. Two minutes of input sanitation saves an hour of "why is the lat 0,0?" investigation.
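The sanitation step can be very small. The sketch below is one possible filter, with cleanAddress as a hypothetical name; the minimum length of 5 and the "must contain a letter" rule are arbitrary illustrative thresholds, not requirements of the API.

```javascript
// Returns a cleaned address string, or null when the input is not worth
// sending to the geocoder at all.
function cleanAddress(raw, minLength = 5) {
  if (typeof raw !== 'string') return null;
  const s = raw.replace(/\s+/g, ' ').trim(); // collapse runs of whitespace
  if (s.length < minLength) return null;
  if (!/\p{L}/u.test(s)) return null;        // digits or punctuation only
  return s;
}

console.log(cleanAddress('  1   Apple Park Way ')); // 1 Apple Park Way
console.log(cleanAddress('   '));                   // null
console.log(cleanAddress('12345'));                 // null
```

Rows that come back null can go straight to the error column (for example as 'invalid_input') without spending a request.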

Frequently Asked Questions

Do I need an SDK?

No. The API is REST + JSON, and Node has fetch built in. The "SDK" most teams end up writing is the geocodeWithRetry function above plus a wrapper for the batch endpoint. About 30 lines.

Does this work with TypeScript?

Yes. Type the response shape from the example JSON above. The minimum useful types are { results: Array<{ location: { lat: number; lng: number }; accuracy: string; accuracy_score: number; components: Record<string, string> }> }. If you want stricter, narrow accuracy to a string union of "houseNumber" | "street" | "place" | "postcode".

Can I use this from a browser instead of Node?

You can, but you should not put a long-lived API key in browser code — anyone can read it. Either proxy through your own backend (the same code in this post, just inside an Express or Fastify route), or issue short-lived browser-scoped keys.

What concurrency should I use for my rate limit?

Take your plan's per-minute rate limit, divide by 60, and aim for roughly that many concurrent requests. 1,000/min ÷ 60 ≈ 16. Round down. The rule assumes each request takes about one second; if your measured latency is lower, scale the number down in proportion. The X-RateLimit-Remaining header is the truth source: adjust if you see it dropping fast.
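The arithmetic is an application of Little's law (requests in flight = request rate × time per request). As a rough sketch, with suggestConcurrency as a hypothetical helper and the one-second default latency being an assumption you should replace with your own measurement:

```javascript
// Estimates how many concurrent requests keep you at or under the plan limit.
// ratePerMinute is the plan's ceiling; avgLatencyMs is what you observe.
function suggestConcurrency(ratePerMinute, avgLatencyMs = 1000) {
  const perSecond = ratePerMinute / 60;
  const inFlight = perSecond * (avgLatencyMs / 1000);
  return Math.max(1, Math.floor(inFlight)); // round down, but never below 1
}

console.log(suggestConcurrency(1000));      // 16
console.log(suggestConcurrency(1000, 500)); // 8
console.log(suggestConcurrency(100));       // 1
```

Note how halving the latency halves the safe concurrency: faster responses mean each slot sends more requests per minute.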

How do I detect a no-match versus a successful low-confidence match?

data.results is an empty array on no-match. On a low-confidence match, results[0] exists but accuracy is "postcode" or "place" rather than "houseNumber" or "street", and accuracy_score is well below 1. Decide a threshold (0.7 is a reasonable default) and treat anything below as no-match.

How do I geocode addresses outside the US?

Pass the right country code in the country parameter — ISO alpha-2, so DE for Germany, GB for the UK, BR for Brazil. Coverage spans 39 countries today including the full top 10 by address count. The API page lists per-country counts.

Should I use single requests or the batch endpoint?

Batch when you have ≥100 addresses to do at once and your plan allows it. One POST is cheaper for both sides than 100 GETs, and the latency saving is roughly the same as your average per-request time times the request count divided by concurrency. Singles are simpler for streaming workloads where addresses arrive over time.

Where to go from here

The full reference for the API is at csv2geo.com/api. If you would rather work in Python, the Python tutorial follows the same structure with requests and asyncio. For workloads where you want to upload a file rather than write client code, the batch geocoder is the same backend behind a web UI.

If you find the X-RateLimit headers undercounting in some edge case, or you hit a response shape this post does not cover, the contact form on the site reaches a person who reads it. Bug reports with curl reproductions get fixed quickly.

I.A. / CSV2GEO Creator
